FIMeval Project

This is the software page for the evaluation framework, with related package information.

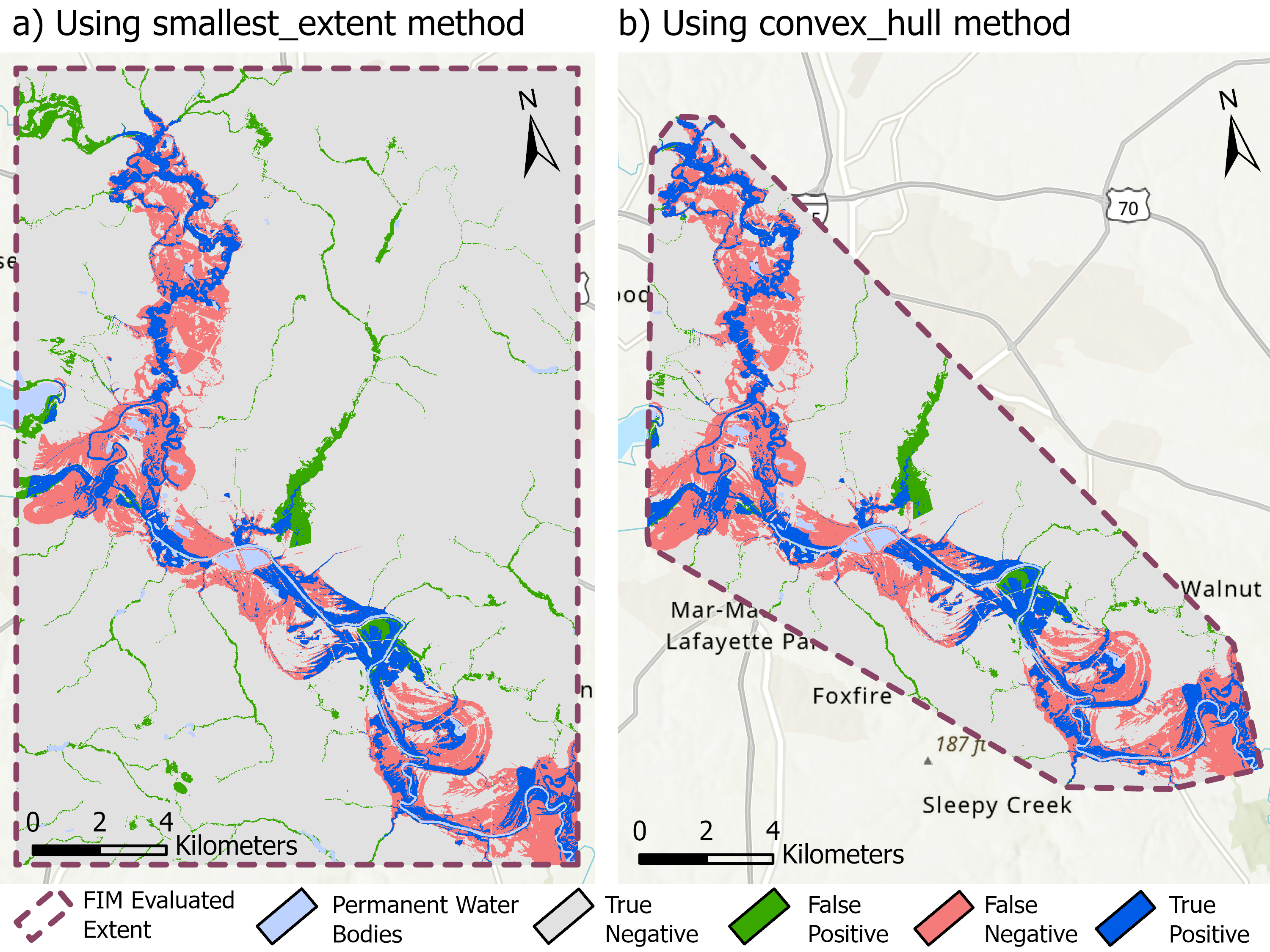

This publication centers on evaluation methodology for flood inundation predictions across benchmark datasets. It complements the software stack by showing how prediction quality can be assessed consistently and reproducibly at scale.

The paper establishes a framework for comparing flood inundation predictions against benchmark databases. It is useful both as a scientific contribution and as a professional explanation of why the evaluation software in the project portfolio matters.
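To make the idea of comparing a prediction against a benchmark concrete, here is a minimal sketch of one common approach: a cell-by-cell contingency table and the critical success index (CSI). It is an illustration only, not FIMeval's actual API or necessarily the metric set used in the paper. The function names `contingency_counts` and `critical_success_index` and the tiny grids are hypothetical, and both grids are assumed to be aligned binary rasters (1 = wet, 0 = dry).

```python
from typing import Dict, Sequence

Grid = Sequence[Sequence[int]]


def contingency_counts(predicted: Grid, benchmark: Grid) -> Dict[str, int]:
    """Count agreement classes cell by cell (hypothetical helper).

    Assumes both grids have the same shape and hold 1 (wet) or 0 (dry).
    """
    tp = fp = fn = tn = 0
    for pred_row, bench_row in zip(predicted, benchmark):
        for p, b in zip(pred_row, bench_row):
            if p and b:
                tp += 1  # wet in both prediction and benchmark
            elif p and not b:
                fp += 1  # predicted wet, benchmark dry
            elif b:
                fn += 1  # predicted dry, benchmark wet
            else:
                tn += 1  # dry in both
    return {"tp": tp, "fp": fp, "fn": fn, "tn": tn}


def critical_success_index(counts: Dict[str, int]) -> float:
    """CSI = TP / (TP + FP + FN); NaN when no wet cells exist in either grid."""
    denom = counts["tp"] + counts["fp"] + counts["fn"]
    return counts["tp"] / denom if denom else float("nan")


# Toy 2x3 grids, invented purely for illustration.
predicted = [[1, 1, 0],
             [0, 1, 0]]
benchmark = [[1, 0, 0],
             [0, 1, 1]]

counts = contingency_counts(predicted, benchmark)
csi = critical_success_index(counts)
print(counts, csi)
```

CSI ignores cells that are dry in both grids, so the score is not inflated by large dry areas around the flood extent. A real workflow would also need to reproject and resample the rasters onto a common grid before counting.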
