GitHub

Source repository and examples.

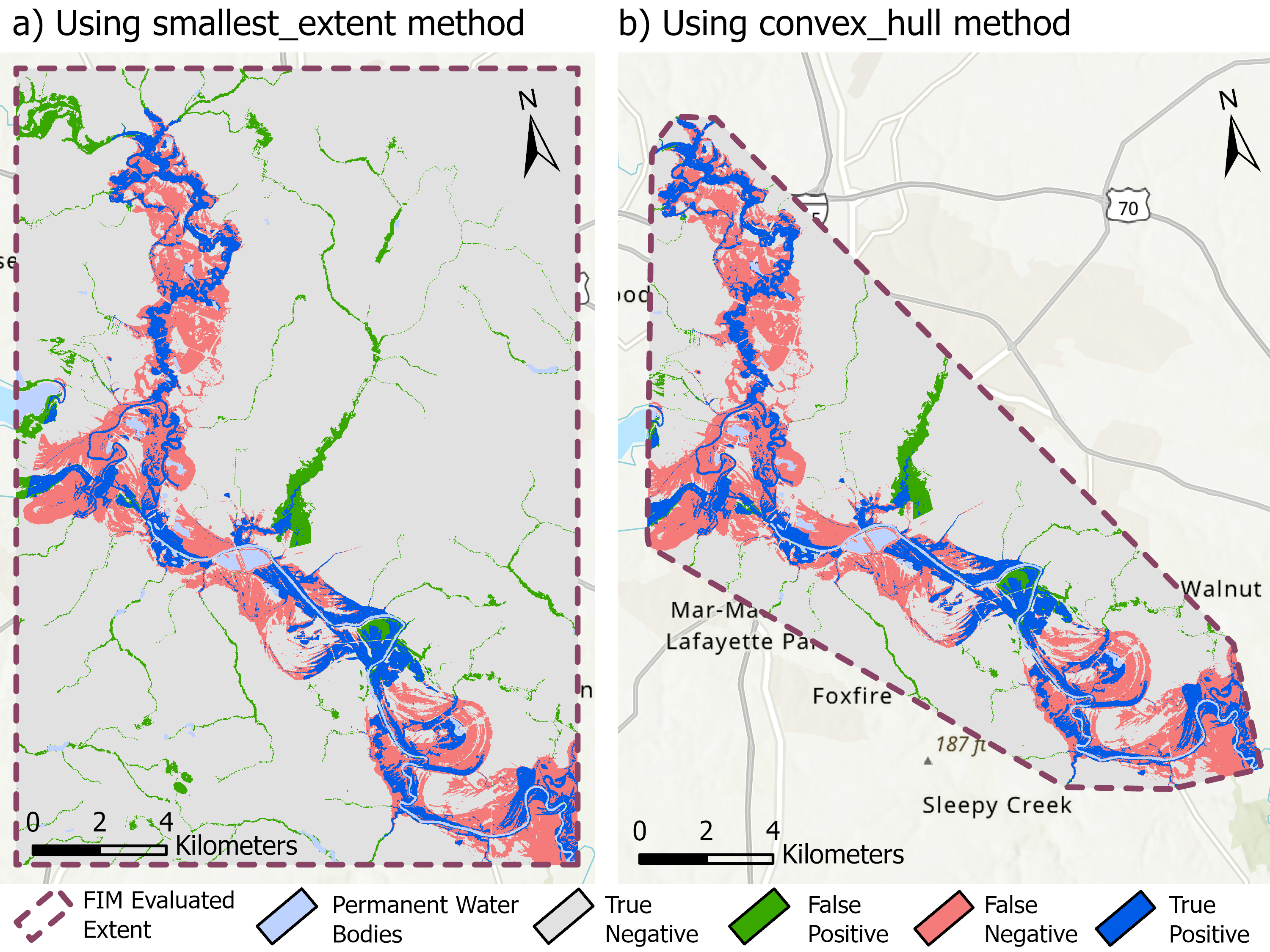

FIMeval is a framework for evaluating flood-inundation predictions over benchmark databases. It helps compare model outputs with reference products using standardized metrics and repeatable preprocessing logic.
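To make the idea of comparing a prediction against a reference concrete, here is a minimal sketch of contingency-based scoring for binary flood maps. It is not FIMeval's API: the function name `flood_metrics`, the flat 0/1 cell lists, and the choice of metrics (critical success index, probability of detection, false alarm ratio) are assumptions made for illustration only.

```python
from typing import Dict, Sequence


def flood_metrics(predicted: Sequence[int], reference: Sequence[int]) -> Dict[str, float]:
    """Score a binary flood map against a reference map (hypothetical helper).

    Both inputs are flat sequences of cells, where 1 means wet and 0 means dry.
    """
    if len(predicted) != len(reference):
        raise ValueError("predicted and reference must cover the same cells")

    # Build the contingency counts cell by cell.
    tp = fp = fn = tn = 0
    for p, r in zip(predicted, reference):
        if p and r:
            tp += 1  # wet in both maps
        elif p and not r:
            fp += 1  # predicted wet, reference dry
        elif r:
            fn += 1  # predicted dry, reference wet
        else:
            tn += 1  # dry in both maps

    nan = float("nan")
    return {
        # Critical success index: hits over all cells wet in either map.
        "csi": tp / (tp + fp + fn) if (tp + fp + fn) else nan,
        # Probability of detection: share of reference-wet cells that were predicted.
        "pod": tp / (tp + fn) if (tp + fn) else nan,
        # False alarm ratio: share of predicted-wet cells that are dry in the reference.
        "far": fp / (tp + fp) if (tp + fp) else nan,
    }


# Toy example with six cells.
predicted = [1, 1, 0, 0, 1, 0]
reference = [1, 0, 0, 1, 1, 0]
scores = flood_metrics(predicted, reference)
print(scores)
```

In a real workflow the two maps would first need to share the same grid, extent, and wet/dry threshold, which is the kind of preprocessing the framework aims to make repeatable.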

Evaluation is where flood models become trustworthy. FIMeval makes comparison workflows more transparent, repeatable, and easier to connect to peer-reviewed research. With over 15,000 downloads, it has established itself as a community standard for systematic quality assurance across a wide range of flood scenarios.